The most common mistake people make when building AI workflows isn't choosing the wrong tool. It's trying to get AI to do everything — including the parts it's bad at.

AI is exceptional at reasoning, synthesizing information, generating language, and handling ambiguity. It is not reliable at structured data manipulation, sequential logic, or tasks where consistency is non-negotiable. When you ask it to do both kinds of work, errors compound quickly.

Here's the math: if each step in a five-step all-AI workflow is 90% accurate and the steps fail independently, your end-to-end success rate is 0.9⁵, or about 59%. That's a system that fails roughly 4 out of 10 times. Not acceptable for a business-critical process.
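To see how quickly this compounds, here is a minimal sketch of the arithmetic. The function name is made up for illustration, and it assumes each step succeeds or fails independently of the others.

```python
def end_to_end_success(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step succeeds, assuming steps fail independently."""
    return per_step_accuracy ** steps


# Compare workflows of different lengths at 90% accuracy per step.
for n in (1, 3, 5, 10):
    print(f"{n} steps at 90%: {end_to_end_success(0.9, n):.0%}")
```

At ten steps the same 90% per-step accuracy leaves you with a workflow that succeeds only about a third of the time.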

59%: the end-to-end success rate of a five-step AI-only workflow at 90% accuracy per step. It fails about 4 in 10 times.

The WAT Framework was designed to fix this. It's the architecture we use at MAXXAM AI for every automation we build — from simple appointment reminders to multi-step agentic systems. The core idea is simple: let AI handle reasoning, let deterministic code handle execution.

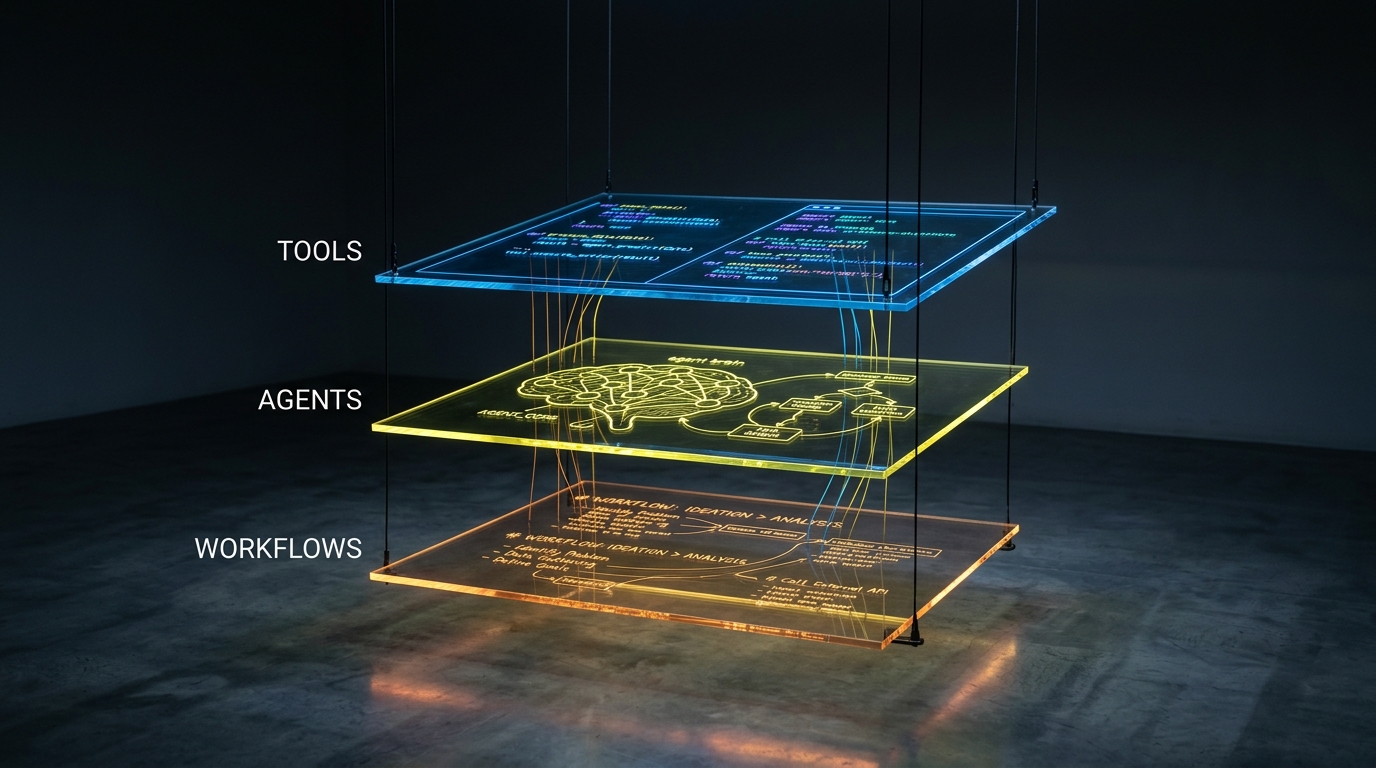

The Three Layers of WAT

The Instructions

Workflows are plain-language SOPs (Standard Operating Procedures) stored as markdown files. Each workflow defines: what the objective is, what inputs are required, which tools to use, what the expected output looks like, and how to handle edge cases. Think of them as the briefing document you'd give a new team member. They don't execute anything — they tell the agent exactly what to do and in what order.
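One way to keep workflow files honest is to check that each one covers the five things listed above. The sketch below assumes a convention where each item is a markdown heading with these exact names; the heading names and the `missing_sections` helper are hypothetical, not a fixed WAT format.

```python
# Hypothetical section names; adjust to whatever your workflow files use.
REQUIRED_SECTIONS = ("Objective", "Inputs", "Tools", "Expected Output", "Edge Cases")


def missing_sections(workflow_markdown: str) -> list[str]:
    """Return the required sections that have no matching markdown heading."""
    headings = {
        line.lstrip("#").strip().lower()
        for line in workflow_markdown.splitlines()
        if line.startswith("#")
    }
    return [s for s in REQUIRED_SECTIONS if s.lower() not in headings]


example = """\
# Lead Follow-Up
## Objective
Respond to every new contact-form lead.
## Inputs
Name, email, message.
## Tools
crm_lookup, send_email
## Expected Output
One sent email and one CRM log entry.
"""

print(missing_sections(example))  # ['Edge Cases']
```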

The Decision-Maker

The agent (an LLM like Claude) reads the workflow, decides how to execute it, and coordinates tools in the right sequence. The agent doesn't execute actions itself; it orchestrates. It decides what to do next based on context, handles failures and unexpected situations, and asks for clarification when inputs are ambiguous. This is where AI earns its place: reasoning, adapting, problem-solving.

The Execution Layer

Tools are deterministic scripts — Python functions, API calls, n8n nodes — that do the actual work. Send the SMS. Query the database. Parse the CSV. Write to the CRM. These scripts are consistent, fast, and testable. They don't hallucinate. They don't interpret. They execute one specific action reliably, every time. When something fails, the error is clear and reproducible.
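As an illustration of what "boring and reliable" looks like, here is a sketch of a CSV-parsing tool using Python's standard `csv` module. The function name and the `name`/`email` columns are assumptions made for the example. Given the same input it always returns the same output, and a malformed file produces a clear error.

```python
import csv
import io


def parse_leads_csv(text: str) -> list[dict[str, str]]:
    """Parse lead rows from CSV text; fail loudly if expected columns are absent."""
    reader = csv.DictReader(io.StringIO(text))
    required = {"name", "email"}  # assumed column names
    missing = required - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"CSV is missing columns: {sorted(missing)}")
    return [
        {
            "name": (row["name"] or "").strip(),
            "email": (row["email"] or "").strip().lower(),
        }
        for row in reader
    ]


print(parse_leads_csv("name,email\nAda , ADA@Example.com\n"))
```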

The separation principle: Probabilistic AI handles reasoning. Deterministic code handles execution. The agent decides what to do. The tool does it.
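In code, this separation can be as small as a lookup table. The sketch below assumes the agent's decision arrives as a dictionary with a tool name and arguments; that shape, the tool names, and the stub tools are all hypothetical. The point is that the agent only picks an entry, and plain code does the rest.

```python
from typing import Callable


def send_sms(to: str, body: str) -> str:
    # Stub: a real tool would call an SMS provider here.
    return f"SMS to {to}: {body}"


def log_event(message: str) -> str:
    # Stub: a real tool would write to a sheet or CRM.
    return f"logged: {message}"


# The only actions the agent is allowed to trigger.
TOOLS: dict[str, Callable[..., str]] = {"send_sms": send_sms, "log_event": log_event}


def run_tool(decision: dict) -> str:
    """Execute the tool the agent chose; reject anything outside the registry."""
    name = decision["tool"]
    if name not in TOOLS:
        raise KeyError(f"agent requested unknown tool: {name!r}")
    return TOOLS[name](**decision["args"])


# A decision as the agent might emit it (assumed format).
decision = {"tool": "send_sms", "args": {"to": "+15550100", "body": "Hi"}}
print(run_tool(decision))
```

If the agent names a tool that doesn't exist, the failure is immediate and traceable to the decision rather than buried in the output.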

Why This Architecture Works

The WAT framework solves the compounding accuracy problem by isolating where uncertainty lives. AI reasoning is confined to Layer 2, the agent. Once the agent decides to call a tool, that tool behaves the same way every time for the same input. Errors become diagnosable instead of mysterious.

When something breaks in a WAT system, you know exactly where to look:

- Wrong outcome from a tool? The tool script has a bug. Fix it once, it's fixed everywhere.

- Agent called the wrong tool? The workflow instructions are ambiguous. Update the workflow.

- Tool called correctly but data was wrong? The agent's reasoning step needs better context or constraints.

This is dramatically easier to debug than a workflow where AI is making and executing decisions simultaneously across every step.

A Real Example: Automated Lead Follow-Up

Here's how WAT maps to a concrete business automation — a lead follow-up sequence triggered when someone submits a contact form.

Without WAT (AI does everything)

A single AI prompt receives the form submission and is asked to: extract the lead's information, look up whether they're already in the CRM, compose a personalized follow-up email, send it, log the interaction, and schedule a reminder if they don't respond. Six responsibilities. Six chances for the AI to hallucinate, misformat data, or silently fail.

With WAT

The same workflow, split correctly:

- Workflow defines the SOP: "When a lead submits a form, check if they're in the CRM. If not, create a record. Compose a personalized first response. Send it. Log it. Schedule a follow-up in 48 hours if no reply."

- Agent reads the workflow, orchestrates the sequence, and composes the personalized email using the lead's information — that's the reasoning task it's genuinely good at.

- Tools handle: CRM lookup (API call), CRM record creation (API call), email send (SMTP or SendGrid), task scheduling (n8n delay node), logging (write to sheet or CRM).

The agent's only generative task is writing the personalized email. Every other step is a deterministic tool call that the agent sequences. The system runs reliably at scale because the AI is only asked to do what AI is actually good at.
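Here is a rough sketch of that split in Python. Everything in it is a stand-in: `FakeBackend` replaces the real CRM, mailer, and scheduler with in-memory lists, `compose_email` is a placeholder for the LLM call, and the step order is hard-coded for brevity where a real agent would read it from the workflow.

```python
from dataclasses import dataclass, field


@dataclass
class FakeBackend:
    """In-memory stand-ins for the CRM, email sender, log, and scheduler."""
    crm: dict[str, dict] = field(default_factory=dict)
    outbox: list[tuple[str, str]] = field(default_factory=list)
    log: list[str] = field(default_factory=list)
    scheduled: list[tuple[str, int]] = field(default_factory=list)


def compose_email(lead: dict) -> str:
    # Placeholder for the agent's reasoning task (an LLM call in practice).
    return f"Hi {lead['name']}, thanks for reaching out about {lead['topic']}."


def handle_form_submission(lead: dict, backend: FakeBackend) -> None:
    email = lead["email"]
    if email not in backend.crm:                 # tool: CRM lookup
        backend.crm[email] = dict(lead)          # tool: CRM record creation
    body = compose_email(lead)                   # agent: write the email
    backend.outbox.append((email, body))         # tool: email send
    backend.log.append(f"sent first response to {email}")  # tool: logging
    backend.scheduled.append((email, 48))        # tool: follow-up in 48 hours


backend = FakeBackend()
handle_form_submission(
    {"name": "Ada", "email": "ada@example.com", "topic": "pricing"}, backend
)
print(backend.outbox)
```

Only one line in the handler depends on a model. Every other line can be tested without one.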

How to Apply WAT to Your Business

You don't need to be a developer to think in WAT. Here's the mental model:

Step 1: Write the Workflow First

Before touching any tool, write out what needs to happen in plain language. What triggers the process? What should happen next? What are the edge cases? This is just a numbered list to start — you'll refine it. The act of writing it forces clarity about what you're actually trying to automate.

Step 2: Identify What Requires Reasoning vs. Execution

Go through your workflow step by step. Ask: does this step require judgment, interpretation, or language generation? If yes — that's the agent's job. Does this step require a consistent, predictable action? If yes — that's a tool.

Judgment/interpretation → Agent. Consistent action → Tool.

Step 3: Build the Tools Before the Agent

Build your deterministic execution layer before you add AI. Test each tool in isolation. An n8n node that sends an SMS should work perfectly on its own before you wire it to any AI decision-making. Tools should be boring, reliable, and individually testable.
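Testing a tool in isolation can be very plain. The sketch below uses a made-up `format_reminder` tool and two bare-assert tests, with no AI and no network involved; a test framework such as `unittest` or `pytest` would work just as well.

```python
def format_reminder(name: str, time: str) -> str:
    """Build the reminder text; reject incomplete input instead of guessing."""
    if not name or not time:
        raise ValueError("name and time are required")
    return f"Hi {name}, this is a reminder of your appointment at {time}."


def test_formats_message() -> None:
    expected = "Hi Ada, this is a reminder of your appointment at 3:00 PM."
    assert format_reminder("Ada", "3:00 PM") == expected


def test_rejects_missing_fields() -> None:
    try:
        format_reminder("", "3:00 PM")
    except ValueError:
        return
    raise AssertionError("expected ValueError for a missing name")


test_formats_message()
test_rejects_missing_fields()
print("all tool tests passed")
```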

Step 4: Add the Agent Layer

Once your tools work reliably, connect them to your agent with a clear workflow document. Give the agent enough context to make good decisions — and clear constraints so it doesn't improvise where you need consistency.

Step 5: Test End-to-End, Then Monitor

Run the full workflow on test data before going live. After launch, log both agent decisions and tool outputs separately. When something goes wrong — and it will, occasionally — you'll know exactly which layer to look at.

When to Add AI vs. When to Keep It Simple

Not every automation needs an AI agent. The WAT framework works at any level of complexity:

- Simple automation (appointment reminder): Workflow + Tools only. No AI reasoning needed. Trigger fires → tool sends SMS.

- Moderate automation (personalized follow-up): Workflow + Agent (for personalization) + Tools (for delivery and logging).

- Complex automation (agentic research and outreach): Workflow + Agent (for multi-step reasoning, content generation) + multiple Tools (data sources, CRM, messaging, scheduling).

Rule of thumb: Start with the simplest version that works. Only add AI reasoning where the output genuinely varies based on context. If every input should produce the same output — that's a tool, not an agent.

The Self-Improvement Loop

One of the biggest advantages of the WAT framework is that it improves over time. Every time a tool fails, you fix the script and the fix applies everywhere that tool is used. Every time an agent makes a poor decision, you update the workflow with better instructions. The system gets smarter and more reliable with each iteration — not because the AI gets better, but because the instructions and execution layer get cleaner.

This is how we approach every MAXXAM AI client engagement: build the workflow, build the tools, add the agent, run it, improve it. The framework is how we deliver systems that keep working six months after we build them.

AI automation doesn't have to be fragile. When you separate reasoning from execution — when AI does what it's good at and deterministic code does what it's good at — you get systems that are reliable enough to run your business on.

That's the WAT framework. And now you know how to use it.